Enhancing the Retail Experience with Actlyzer

- Fujitsu’s intelligent behavioral analysis technology delivers in-store context marketing

April 7, 2022

In online stores, context marketing has been widely adopted. By analyzing the “context” of customers' psychological state from their access history and personal information, retailers deliver the right content, such as emails and advertisements, to each customer.

In the case of physical stores, it is much more difficult to obtain the context such as the shopping experience, interest in products that were not purchased, and the individual consumer needs and wants within the group (e.g., friends and family).

At Fujitsu, we have developed a relationship-sensing technology that recognizes the relationships between people and objects from in-store videos, identifying complex behavior (e.g., returning a product to the shelf after picking it up) and relationships with surrounding people. It enables us to analyze complex consumer behavior by capturing the context behind both purchasing and non-purchasing behavior in physical stores. In addition, we have developed an environmental sensing technology that recognizes environments with different store layouts and camera locations, thereby significantly reducing the number of man-hours required for installation and operation.

These newly developed technologies automate the large-scale collection of customer contexts in physical stores, making continuous, large-scale context marketing possible. The direct result is an improved customer experience, including optimal customer service and better sales floor operation.

Understand the context from actual behavior: Why a consumer bought an item, as well as why they didn't

Actlyzer, an AI technology for video-based behavioral analysis developed by Fujitsu Research, recognizes approximately 100 kinds of basic human actions and combines them in order to recognize complex behavior. In addition to combining the actions themselves and their changes over time, we have developed a relationship-sensing technology that further extends Actlyzer’s functions. It recognizes many different aspects of a video scene, such as people’s attributes, relationships between people, and relationships between people and objects, and expresses these as action scene graphs.

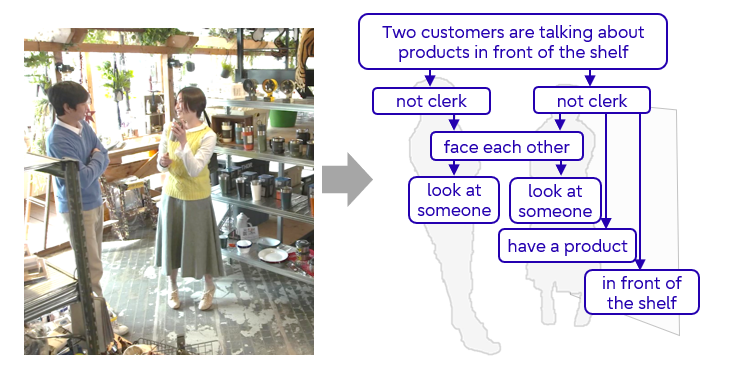

In general, AI-based behavioral analysis technology focuses on the actions of a single person. However, in situations where two people are talking about a product in front of the shelf, it needs to be able to interpret mutual conversational actions as well as the positional relationship between the individuals and the shelf. The relationship-sensing technology recognizes not only individual people but also the relationships between people, objects, and the environment based on the action scene graphs. It can recognize scenes in real time not only within a single frame but also in multiple frames, using edge devices installed in the field.
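To make the idea of an action scene graph more concrete, here is a minimal Python sketch of how people, objects, and the environment could be held as nodes, with relationships as labeled edges. The class names, node kinds, and relation labels (`holds`, `talks_to`, `stands_in_front_of`) are assumptions made for illustration; the article does not describe Actlyzer's internal data structures.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Node:
    """One entity in the scene (hypothetical structure, not Actlyzer's)."""
    node_id: str
    kind: str                 # "person", "object", or "environment"
    attributes: tuple = ()    # e.g. coarse, non-identifying attributes


@dataclass
class ActionSceneGraph:
    """Nodes plus directed, labeled edges (source, relation, target)."""
    nodes: dict = field(default_factory=dict)
    edges: list = field(default_factory=list)

    def add_node(self, node):
        self.nodes[node.node_id] = node

    def add_edge(self, source_id, relation, target_id):
        if source_id not in self.nodes or target_id not in self.nodes:
            raise KeyError("both endpoints must be added as nodes first")
        self.edges.append((source_id, relation, target_id))

    def relations_of(self, node_id):
        """Return (relation, target) pairs that start at node_id."""
        return [(r, t) for s, r, t in self.edges if s == node_id]


def build_example_graph():
    # Two people talking about a product in front of a shelf.
    g = ActionSceneGraph()
    g.add_node(Node("person_a", "person", ("adult",)))
    g.add_node(Node("person_b", "person", ("adult",)))
    g.add_node(Node("product_1", "object"))
    g.add_node(Node("shelf_1", "environment"))
    g.add_edge("person_a", "holds", "product_1")
    g.add_edge("person_a", "talks_to", "person_b")
    g.add_edge("person_b", "talks_to", "person_a")
    g.add_edge("person_a", "stands_in_front_of", "shelf_1")
    return g


graph = build_example_graph()
print(graph.relations_of("person_a"))
```

In a structure like this, a scene that involves several people becomes a query over edges rather than a property of any single person, which is the distinction the paragraph above draws.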

Our system does not use or record identification information such as a person’s face, even if cameras capture this information. However, in actual operation, additional privacy protection may be necessary depending on relevant national and local legal systems or cultures.

Figure 1 Example of an action scene graph expressing relationships between people


The following two videos show recognition examples of the relationship between several people from their in-store behavior, based on the scene graph.

Scene 1: Shopping behavior inside a shop

Scene 1 is an example of recognizing the behavior “One consumer was willing to buy and picked up the product, but after talking to the other, they returned it to the shelf and did not buy it.” Actlyzer recognizes the whole series of actions, from taking the product from the shelf to returning it, based on changes in the image features of the customer's hand relative to the shelf area. This enables Actlyzer to record non-purchase situations and use them to improve sales floors.
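The step from basic actions to a complex behavior such as "picked up, talked, returned" can be sketched as a small pass over a time-ordered stream of recognized actions. This is an illustrative simplification, not Fujitsu's implementation: the action labels (`take_from_shelf`, `talk`, `return_to_shelf`, `leave_area`) and the event format are hypothetical.

```python
def find_non_purchases(events):
    """Find 'picked up, then returned' behaviors in a basic-action stream.

    events: list of (timestamp_seconds, person_id, action), sorted by time.
    The action labels are hypothetical stand-ins for basic actions.
    """
    holding_since = {}   # person_id -> time the product was taken
    talked = {}          # person_id -> talked while holding the product?
    results = []
    for ts, person, action in events:
        if action == "take_from_shelf":
            holding_since[person] = ts
            talked[person] = False
        elif action == "talk" and person in holding_since:
            talked[person] = True
        elif action == "return_to_shelf" and person in holding_since:
            results.append({
                "person": person,
                "picked_at": holding_since.pop(person),
                "returned_at": ts,
                "talked_before_return": talked.pop(person),
            })
        elif action == "leave_area":
            # The person left while still holding the product: not a return.
            holding_since.pop(person, None)
            talked.pop(person, None)
    return results


events = [
    (1.0, "a", "take_from_shelf"),
    (2.5, "a", "talk"),
    (4.0, "a", "return_to_shelf"),
    (5.0, "b", "take_from_shelf"),
    (6.0, "b", "leave_area"),
]
non_purchases = find_non_purchases(events)
print(non_purchases)
```

Only person `a` is reported, because `b` leaves the area with the product instead of returning it to the shelf.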

Scene 2: Customer service using a consumer behavioral model

In Scene 2, a store sales assistant serves the customer at exactly the right moment, using information about the customer's growing desire to purchase, which is inferred by associating their behavior with their psychological state. There are several specific psychological states before a customer makes a purchase: Attention, Interest, Desire, and Action. Various consumer behavioral models, such as *AIDA, AIDMA, and AIDCA, already exist. But with our relationship-sensing technology, we can now capture a wider variety of situations, ranging from purchase to non-purchase, and record them as useful and relevant context.

*AIDA, AIDMA, and AIDCA: Models of the psychological states leading to consumer purchasing decisions.

AIDA : Attention ⇒ Interest ⇒ Desire ⇒ Action

AIDMA : Attention ⇒ Interest ⇒ Desire ⇒ Memory ⇒ Action

AIDCA : Attention ⇒ Interest ⇒ Desire ⇒ Conviction ⇒ Action
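As a rough illustration of associating observed behavior with the AIDA stages listed above, the sketch below maps behavior labels to stages and reports the furthest stage a customer has reached. The behavior labels and their stage assignments are assumptions chosen for this example; the article does not specify how Actlyzer performs this association.

```python
AIDA = ["Attention", "Interest", "Desire", "Action"]

# Hypothetical mapping from observed behaviors to AIDA stages.
BEHAVIOR_TO_STAGE = {
    "look_at_shelf": "Attention",
    "stop_in_front_of_shelf": "Interest",
    "take_from_shelf": "Desire",
    "inspect_product": "Desire",
    "put_in_basket": "Action",
}


def furthest_stage(behaviors):
    """Return the latest AIDA stage supported by the behaviors, or None."""
    best = -1
    for behavior in behaviors:
        stage = BEHAVIOR_TO_STAGE.get(behavior)
        if stage is not None:
            best = max(best, AIDA.index(stage))
    return AIDA[best] if best >= 0 else None


def should_notify_staff(behaviors):
    """Suggest assistance while desire is visible but no purchase action yet."""
    return furthest_stage(behaviors) == "Desire"


observed = ["look_at_shelf", "stop_in_front_of_shelf", "take_from_shelf"]
print(furthest_stage(observed), should_notify_staff(observed))
```

The same structure would extend to AIDMA or AIDCA by changing the stage list and the mapping.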

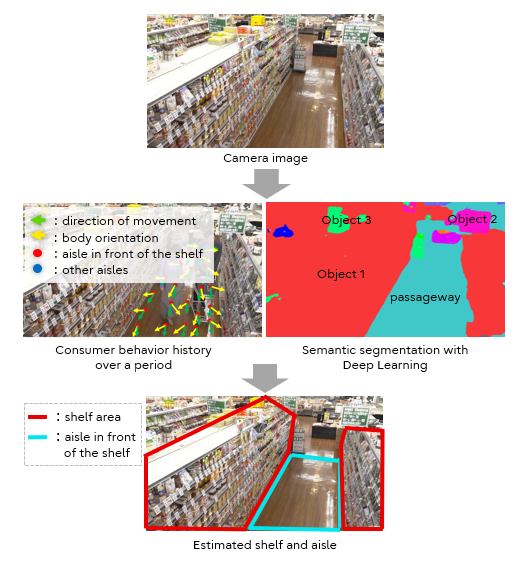

Sales floor layout recognition to improve AI deployment efficiency

In physical stores, the layout of product shelves and the location of cameras differ from store to store, and layouts change over time. Actions such as “reaching for a product on the shelf” can be recognized by specifying the area of the shelf in the video. However, if there are many stores and multiple cameras in each store, the man-hours involved in specifying all of these areas can be a serious obstacle to deployment. With our environmental sensing technology, we overcome this challenge by estimating the area of the product shelf shown in the video. We do this by installing a single camera for a specific period of time and monitoring consumers’ actions. Our technology recognizes the shelf area and the aisle in front of the shelf by integrating customers’ shelf-specific behaviors (for example, “many customers pass looking at the shelf”) with object area segmentation using Deep Learning, which automates area designation. This makes it easier to expand the system to multiple stores with the same basic context analysis, as well as to respond to changes in, and the expansion of, sales floor layouts.
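The integration of observed behavior with segmentation can be pictured with a small sketch: candidate regions from a segmentation step are kept as shelf areas only if enough shelf-directed behavior was observed inside them. Everything here is an assumption for illustration, including the grid representation, the `cell_size` and `min_hits` parameters, and the idea of a simple count threshold; the article does not describe the actual algorithm.

```python
from collections import Counter


def estimate_shelf_regions(candidate_regions, behavior_points,
                           cell_size=20, min_hits=3):
    """Select segmented regions that are supported by observed behavior.

    candidate_regions: dict of region_id -> set of (col, row) grid cells,
        standing in for the output of an object area segmentation model.
    behavior_points: list of (x, y) pixel positions where shelf-directed
        behavior (e.g. looking at or reaching toward a shelf) was observed.
    Returns a dict of region_id -> number of supporting observations.
    """
    # Accumulate observations into a coarse grid over the camera image.
    hits = Counter(
        (int(x) // cell_size, int(y) // cell_size) for x, y in behavior_points
    )
    selected = {}
    for region_id, cells in candidate_regions.items():
        support = sum(hits[cell] for cell in cells)
        if support >= min_hits:
            selected[region_id] = support
    return selected


candidate_regions = {
    "region_0": {(0, 0), (1, 0)},
    "region_1": {(5, 5)},
}
behavior_points = [(5, 5), (25, 10), (30, 15), (110, 110)]
shelf_regions = estimate_shelf_regions(candidate_regions, behavior_points)
print(shelf_regions)
```

In this toy input, `region_0` collects three observations and is kept, while `region_1` has only one and is discarded. Rerunning the same accumulation after a layout change is what would let the area designation follow the new layout without manual annotation.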

Figure 2 Environmental sensing technology


The view from the development team

Development Team Members from the Advanced Converging Technologies Laboratories, and the Software Technology Business Unit.

- Shun Takeuchi
- Sho Iwasaki
- Atsunori Moteki
- Yoshie Kobayashi
- Takahiro Saitou
- Yuka Sugimura
- Genta Suzuki

Our goal is to set new standards of excellence in the field of AI image recognition, presenting papers at the world’s top conferences and demonstrating our technologies as top performers at the most important benchmark competitions. We are also working to demonstrate their performance through real-world retail sector implementations. While our technologies are designed to solve the problems of conventional behavior analysis technology in physical stores, they also have considerable potential for other fields such as manufacturing and public services. Our mission is to conduct R&D that solves pressing social issues through our cutting-edge technologies.

Looking to the future

We are continuing a number of field trials, and aim to put our technologies to practical use by the end of this fiscal year, as the behavioral analysis component of the GREENAGES Citywide Surveillance solution. In addition, we are actively promoting the development of converging technology that integrates humanities- and social sciences-based knowledge, recognizing the needs of potential customers.

Related links

・Fujitsu Technical Computing Solution GREENAGES Citywide Surveillance [Fujitsu Global Site]

・Fujitsu Develops New "Actlyzer" AI Technology for Video-Based Behavioral Analysis [Fujitsu Global Site] (press release, November 25, 2019)

For more information on this topic

fj-actlyzer-contact@dl.jp.fujitsu.com

If you reside in the EEA (European Economic Area), please contact us at the following address.

Ask Fujitsu

Tel: +44-12-3579-7711

http://www.fujitsu.com/uk/contact/index.html

Fujitsu, London Office

Address: 22 Baker Street

London United Kingdom

W1U 3BW