The damaging impact of AI trained with biased data

The rise in AI’s popularity is accelerating, with applications including advanced image recognition and highly accurate predictive analysis based on past data, tasks that were previously performed only by humans. As the scope of AI expands across society, our lives are becoming ever more convenient, from improved business systems to faster, better services for consumers. But this convenience can come at an ethical price: AI’s decision-making can be adversely influenced by a variety of factors, including biased data and algorithms.

Yuri Nakao, a researcher from the Trusted AI Project at Fujitsu Laboratories, explains, “AI is data-driven technology. The data itself is not neutral, as it reflects the records of past decisions based on human bias, and events in society involving a degree of discrimination. If AI is trained with this data, it can lead to judgements with inherent irrational prejudices such as racial and gender discrimination.”

Ramya Malur Srinivasan, a member of the AI Ethics Group at Fujitsu Laboratories of America (FLA), says, “Machines cannot understand the relationship between cause and effect, although humans can. This is one of the reasons why prejudice can occur."

As an example, Ramya points to the problems associated with a specific AI system: the "Crime occurrence prediction AI" in the United States, where advanced technologies are increasingly being used. This system is built on crime data such as the time, location, and case summary of each incident. It has been adopted by some police departments as a technology for deploying officers effectively, and it is now causing considerable controversy in the US.

“This system uses an algorithm based on criminal history data, so it could create a discriminatory bias. Whether it is appropriate to use such data to estimate crime rates has become a major topic of debate,” explains Ramya.

There is growing evidence of many similar cases. One example is an AI system developed by Amazon in the United States to enable more efficient staff recruitment. Unfortunately, this system proved to discriminate against women, underrating their performance due to a lack of women’s data in the original training data. Amazon has subsequently stopped using this system.

Today, there are many situations where AI may be making biased decisions, and there is an urgent need to apply ethical decision-making and improve AI.

"To avoid discrimination in AI decisions, we should first prevent unfair biases from being included in AI models and datasets." comments Yuri.

Harnessing international research expertise from Japan, the U.S., and Europe

The safe and secure implementation of AI in society is fundamental to a DX company such as Fujitsu. Our mission is to deliver business value not only to our customers but also to our customers’ customers (B2B2C). In 2020, Fujitsu was one of the first companies in Japan to announce guidelines for the use of AI with the "Fujitsu Group AI Commitment", as well as establishing the AI Ethics Research team. This team’s research activities now extend beyond Japan to encompass Europe and the United States.

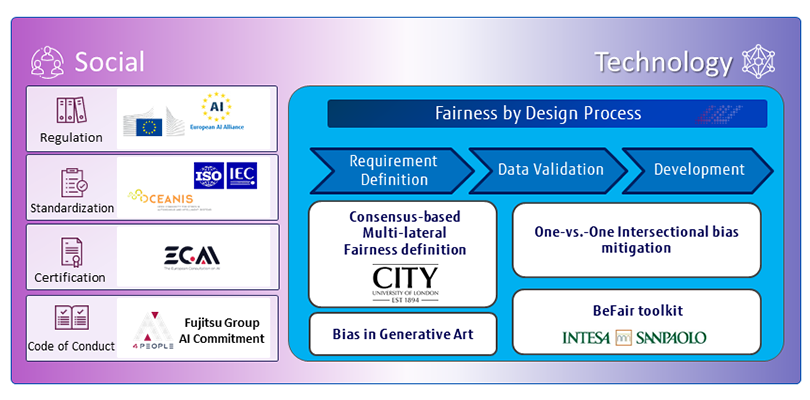

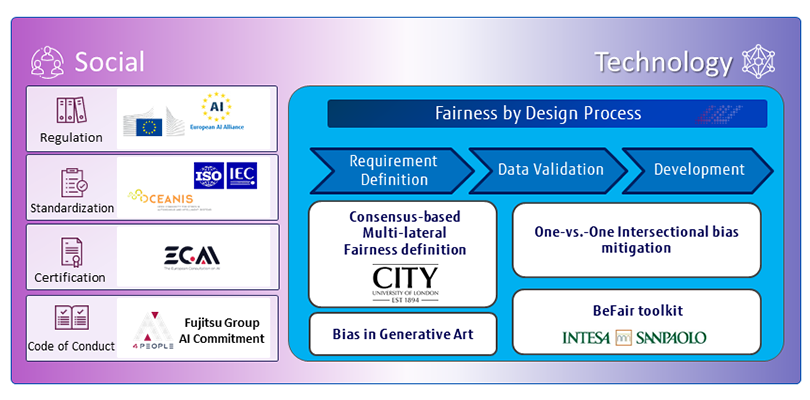

Europe has been pioneering the need to regulate AI, and Fujitsu has taken a leading role in this activity since 2018, promoting AI ethics as a founding partner of the "AI4People" global forum on the social impacts of AI. Fujitsu is also a member of the European Council for Artificial Intelligence (ECAI), the EU AI Alliance and OCEANIS *1.

In the United States, the hub of cutting-edge technology development, Fujitsu’s researchers have focused principally on "Explainable AI (XAI)", a concept first proposed by the Defense Advanced Research Projects Agency (DARPA) in 2017.

Fairness, accountability, and transparency represent the building blocks of the ethics behind AI. Fujitsu’s "Fairness by Design" approach focuses on ensuring fairness from the outset, at the initial design stage. Yuri is conducting research to extract fairness requirements in collaboration with City, University of London. He says:

“One of the challenges of AI ethics is that answers to the question, 'What is fairness?', vary from culture to culture. This is why we are conducting research that incorporates cultural diversity into ethics."

*1: An international forum for discussion among organizations interested in standards for the development of AI systems. Its establishment was proposed by IEEE-SA.

Eliminating Discriminatory Bias with Data Correction Technology

We established our AI Ethics Research Team in 2020 to unite and integrate the research and development activities being undertaken in three different countries. As a result, we have successfully enhanced and accelerated our research output.

One example comes from a series of workshop studies in our joint research project with City, University of London. The workshops were held in Japan, the United States, and the U.K., and the wide-ranging discussions among the many different potential customers produced some exciting new perspectives. In a lively debate about using AI for financial loan screening, we also identified an important difference in how fairness is judged in Japan, the United States, and the U.K. Yuri, who was responsible for organizing the workshops, explains:

“In the United States and the United Kingdom, the concept of fairness is typically considered to represent 'equal treatment regardless of gender or race'. On the other hand, in Japan, it is regarded as 'equal treatment regardless of position, skills, or financial status'. Fairness standards have been designed mainly in Europe and the United States, and currently, there is no framework that covers the various and differing fairness standards. Our workshop revealed the differences between Western and Japanese cultures, and I think it is very important to take the idea of diversity into account when we start thinking about the definition of fairness in a specific situation.”

The team has two basic R&D approaches to resolving data unfairness. The first is technological: "One-vs.-One Mitigation" technology serves as the basis for mitigating discriminatory bias and responding to the fairness requirements associated with certain problems, particularly that of "Intersectional Bias". A single attribute such as "Female" or "a Black person" may not cause bias on its own, but once multiple attributes are combined, such as "Female Black person", a significant discriminatory bias can occur. "In an experiment conducted by our team, we were able to eliminate discriminatory biases while maintaining the accuracy of the model," confirms Yuri.
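To make the idea of intersectional bias concrete, here is a minimal Python sketch. It is not the One-vs.-One Mitigation technique itself, which this article does not describe in detail; it only shows how a gap in outcomes can stay hidden when each attribute is checked separately. The counts, attribute values, and function names are all hypothetical and chosen purely for illustration.

```python
from collections import defaultdict

# Synthetic, illustrative counts only: (applicants, approvals) per subgroup.
SYNTHETIC_COUNTS = {
    ("female", "black"): (10, 2),
    ("female", "white"): (10, 8),
    ("male", "black"): (10, 8),
    ("male", "white"): (10, 2),
}


def build_records(counts):
    """Expand per-subgroup counts into one record per applicant."""
    records = []
    for (gender, race), (applicants, approvals) in counts.items():
        for i in range(applicants):
            records.append(
                {"gender": gender, "race": race, "approved": int(i < approvals)}
            )
    return records


def approval_rates(records, keys):
    """Approval rate for every observed combination of the given attributes."""
    totals = defaultdict(int)
    approved = defaultdict(int)
    for rec in records:
        group = tuple(rec[k] for k in keys)
        totals[group] += 1
        approved[group] += rec["approved"]
    return {group: approved[group] / totals[group] for group in totals}


def rate_gap(rates):
    """Difference between the best-treated and worst-treated group."""
    return max(rates.values()) - min(rates.values())


records = build_records(SYNTHETIC_COUNTS)
by_gender = approval_rates(records, ["gender"])
by_race = approval_rates(records, ["race"])
by_both = approval_rates(records, ["gender", "race"])

print("gap by gender:", rate_gap(by_gender))
print("gap by race:", rate_gap(by_race))
print("gap by gender and race:", round(rate_gap(by_both), 2))
```

In this toy data, every single-attribute group has the same approval rate (0.5), so a check on gender alone or race alone finds no gap. Only the combined view shows that subgroup rates range from 0.2 to 0.8.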

"BeFair" is a toolkit developed by Fujitsu Laboratories of Europe (FLE), together with an Italian bank, Intesa Sanpaolo, a member of AI4People. This toolkit analyzes actual loan review data and implements fairness (on the system). Beatriz San Miguel Gonzalez, a member of the Artificial Intelligence & DA Research Group, explains how it works, "It evaluates data model fairness from a variety of metrics and compares mitigation techniques.”

Research Activities on AI Ethics in Fujitsu Laboratories.

A Bottom-Up Culture that Inspires Original Research

The second R&D element revolves around the social perspective, combining social experience and social science. “Our work at Fujitsu Laboratories stands out in that we identify novel ethical issues with AI at a very early stage. Fujitsu was probably the first company to take cultural differences into account, while many others are only now starting to work on AI ethics,” states Ramya, who conducts her research in the United States, a country at the forefront of AI.

She is also researching Generative Art, referring to art created with the use of AI, which enables her to approach the issue from an entirely new angle.

“Let’s use the example of an app that transforms an uploaded image of a face into a picture, as if it were a Van Gogh painting. It processes the skin colors automatically and changes the skin tone to white, because its training data is based on portraits from the Renaissance period,” Ramya explains. Her unique research has been recognized in the international arena, with her paper presented at the "ACM Conference on Fairness, Accountability, and Transparency", organized by the Association for Computing Machinery (ACM).

All three researchers agree that diversity and a bottom-up culture in the team have helped to generate their unique research activities, leading to the Fairness by Design concept. Original ideas, however, don’t come without their challenges. "It can be very difficult for computer science researchers to grasp ethical issues such as fairness and diversity (as they are outside their usual field)," says Yuri. He studied both natural and social sciences at university and graduate school, before going on to focus on social issues in IT.

Ramya, with her experience in the field of electronic communications in India, received a PhD in the United States and joined Fujitsu Laboratories after graduation. She had previously been working in healthcare and XAI fields, before becoming involved with AI ethics.

As a researcher, Ramya explains her motivation: “This is not something that is limited to AI ethics, but is universal. Once someone identifies an important topic, it encourages lots of people to work on it at the same time, resulting in research being conducted in a short period of time and papers being published. As a result, the technology in that area will rapidly mature. It can be hard and frustrating sometimes to maintain a high level of interest in the process, but it's also fun.”

Beatriz, who lives in Spain, studied software engineering with a user-centric approach as a student. After earning her PhD, she worked as a business analyst for a financial institution. She confirms that these experiences are a strong factor in her current joint research activity in the banking sector.

"A knowledge of computer software is essential for research, but you also need to take the social point of view into account. Nevertheless, it's not always easy to understand the complex equations for AI ethics,” says Beatriz with a wry smile.

The Next Steps to solving social problems

Fujitsu Laboratories is continuing to refine its Fairness by Design process in order to realize and ensure fair AI systems in the future.

“We took what we learned in the workshop and used this to develop a GUI to evaluate the results produced by AI. As the next step, we plan to investigate the differences in perception between individual loan examiners and data scientists,” says Yuri. “IEEE and ISO are moving towards the standardization of the AI life cycle. If all goes well, it will become the norm to develop AI systems that can maintain and ensure AI ethics.”

Fairness by Design can be applied not only to fairness, but also to other areas such as accountability. "Data privacy in AI is also an important issue, so I want to expand my research more and more," Beatriz says enthusiastically.

Ramya is also looking at the bigger picture. “When it comes to AI ethics, we have to support people in developing countries. We also need to think about it in the context of environmental issues, such as CO2 emissions from the vast computing resources that run our AI programs.”

Fujitsu, by advocating "Human Centric AI", will continue to accelerate its research and development activities in this field, using the unique perspectives of its expert researchers on social issues as the starting point.