Archived content

NOTE: this is an archived page and the content is likely to be out of date.

Big Data and Deep Analytics Now with a Next Generation Server: The Fujitsu M10

The amount of real-time data that businesses need to manage is growing at an alarming rate, whether it is traditional business data or unstructured digital content such as YouTube videos, photos, and tweets. Today, data is too big, moves too fast, and comes in too many formats to manage, let alone analyze. The explosive growth of Big Data presents enormous challenges to businesses because conventional server technologies were not designed to quickly process, analyze, and link the massive amounts of data now available.

In response to the Big Data challenge, Fujitsu developed the Fujitsu M10 server, which combines innovative technologies from Fujitsu's supercomputer and mainframe development with Oracle's Solaris operating system and database software, both renowned for their reliability.

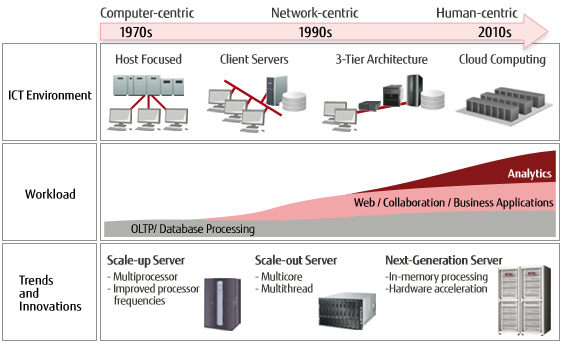

The Evolution of ICT and Server Technologies

As information and communications technology (ICT) continues to progress at a rapid pace, we are witnessing a paradigm shift from technology-centric to human-centric computing, in which computing resources are available to people anywhere and at any time.

In the computer-centric era, stable system operation was given the highest priority, and growth followed a scale-up approach, in which more resources were added to a single, highly reliable node to improve performance. Scale-up was further supported by ever-higher processor clock frequencies and, later, by multicore processors.

In the network-centric era, computing became ubiquitous as web use increased. Scale-out computing, in which capacity grows by adding more nodes to a system, emerged and was characterized by high throughput and distributed processing.

We have now entered the human-centric era, in which business insights are derived from large amounts of data analyzed in real time. Human-centric computing requires real-time processing of enormous amounts of diverse data using both scale-up and scale-out technologies.

Solving ICT Challenges with Big Data and Deep Analytics

Scale Up

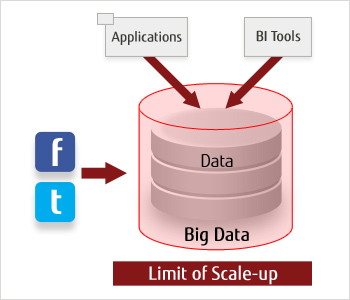

Scale-up servers are typically used for mission-critical, real-time transactional workloads and databases that require high performance, scalability, and high reliability with near 100 percent uptime. Transaction integrity and data consistency are of paramount importance. Processing capacity can be increased by adding resources such as CPUs, memory, or storage to the server without incurring downtime.

Analyzing Big Data and business data using scale-up servers.

Scale-up servers are able to ingest large volumes of data. Their large memory and processing capacity allow entire data sets to be loaded into memory for extremely fast, near-real-time predictive analysis.

The challenge for scale-up servers is that the data volume, especially when working with continuously growing Big Data resources, can rapidly outgrow the capability of a single server. Once that happens, further growth and performance improvements are possible only by migrating to a new, higher-capacity server.

Scale Out

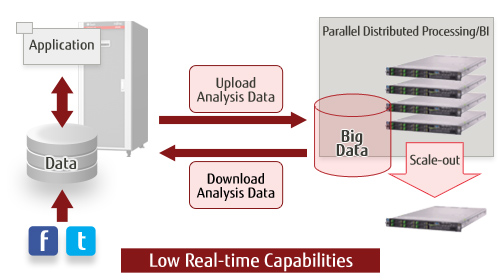

Scale-out systems respond to growing workloads by adding servers and distributing the load across them. Their strengths typically benefit web processing, application servers, and batch analytics processing. With careful development, deployment, and management, scale-out systems can deliver scalable application performance and improved reliability.

However, application performance gains diminish as system size and capacity increase. Application and infrastructure development, as well as maintenance, quickly become more challenging as the number of servers grows, escalating costs and diminishing the return on investment.

Analyzing Big Data and business data using traditional scale-out servers.

Business analytics workloads on traditional scale-out systems typically aggregate large amounts of data over time in batch mode, and the aggregated data is then analyzed to produce a forecast. Big Data analysis differs markedly from this pattern. Rather than replicating the same data on separate servers and processing it in parallel, Big Data must be partitioned, distributed and analyzed over many separate servers, and the partial results must then be consolidated. This multi-step process is not well suited to real-time analysis. In addition, because each server processes a different subset of the data, the failure of any one server raises significant reliability concerns: the consolidated result can no longer cover the complete data set.
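The partition, distribute, analyze, and consolidate pattern described above can be sketched in a few lines. This is a minimal illustration under stated assumptions, not how any particular product implements it: worker threads stand in for separate servers, the per-server analysis is simply a (count, sum) pair so that a global mean can be consolidated, and the hypothetical `failed` parameter simulates servers whose partial results never arrive.

```python
from concurrent.futures import ThreadPoolExecutor


def partition(records, n_servers):
    """Split records round-robin into one partition per (simulated) server."""
    return [records[i::n_servers] for i in range(n_servers)]


def analyze(part):
    """Per-server partial result: record count and sum for this partition only."""
    return (len(part), sum(part))


def consolidate(partials):
    """Combine partial results into (records covered, global mean)."""
    total_count = sum(count for count, _ in partials)
    total_sum = sum(s for _, s in partials)
    if total_count == 0:
        raise ValueError("no partial results to consolidate")
    return total_count, total_sum / total_count


def scatter_gather_mean(records, n_servers, failed=()):
    """Partition the data, analyze each partition in parallel, then consolidate.

    `failed` is a hypothetical set of server indices whose results are lost,
    illustrating that the consolidated answer then covers only part of the data.
    """
    parts = partition(records, n_servers)
    # Threads stand in for separate servers in this sketch.
    with ThreadPoolExecutor(max_workers=n_servers) as pool:
        partials = list(pool.map(analyze, parts))
    surviving = [p for i, p in enumerate(partials) if i not in failed]
    return consolidate(surviving)
```

For example, `scatter_gather_mean(list(range(1, 11)), 3)` covers all 10 records, while passing `failed={1}` drops the partition holding 2, 5, and 8, so the consolidated mean is computed over only 7 records. The sketch makes the reliability concern concrete: no single server holds the whole data set, so losing one server changes the answer rather than merely slowing it down.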

Fujitsu M10: Dynamic Scaling

Can a single platform provide virtually unlimited performance and capacity growth while supporting real-time analysis, forecasting, and decision making?

Yes. The Dynamic Scaling features of the Fujitsu M10 server family were developed specifically to address the challenges of Big Data processing and deep analytics, with the goal of accelerating business growth and innovation.

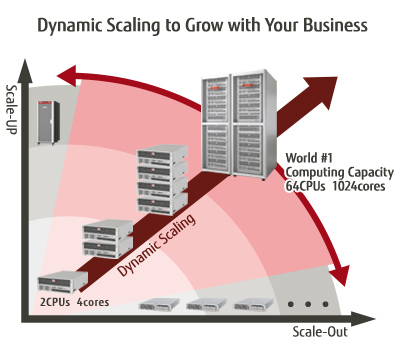

Dynamic Scaling in Fujitsu M10 servers combines the transaction and analytics processing power of scale-up with the capacity growth and economic benefits of scale-out. Fujitsu M10 servers start with high-performance processing capabilities and robust mission-critical features honed over years of mainframe, supercomputer, and business computing experience. Dynamic Scaling adds flexible core activation, hot expansion of building blocks, and no-cost virtualization, so that system capacity can grow in increments that closely match business demand.

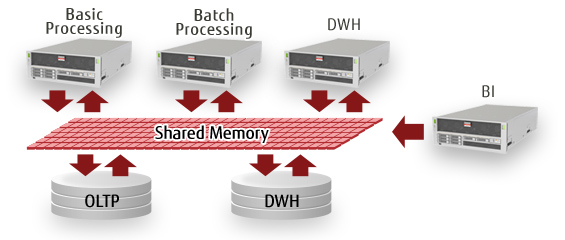

By meeting the combined challenges of enterprise transaction processing, data warehousing, business intelligence, and business analytics in a single scalable infrastructure with Fujitsu M10, businesses can effectively and efficiently apply real-time processing capabilities across their entire enterprise and Big Data assets.

Today and Tomorrow with Dynamic Scaling Fujitsu M10 Servers

As analytics has become increasingly important, ICT has focused on extracting deep insights in real time so that users can make better decisions and ultimately create greater value for their businesses and for society.

With the Fujitsu M10's advanced architecture, a single infrastructure can provide virtually unlimited performance and capacity growth and support real-time analysis, forecasting, and decision making. The Fujitsu M10 server with Dynamic Scaling combines the advantages of scale-up and scale-out, making it easy to grow with the business in real time.

In a society where the role of ICT continues to expand, Fujitsu continues to innovate.