NOTE: this is an archived page and the content is likely to be out of date.

From simple perception to human-centric intelligence: the AI A*STAR is aiming for

With the emergence of deep learning and other AI technologies, and with AI applications such as autonomous driving entering our daily lives, the AI boom is accelerating worldwide. In Singapore, the Agency for Science, Technology and Research (A*STAR) is advancing AI research under a programme called the “A*STAR AI Initiative.” The following is an excerpt from a lecture on the front line of AI research, given at the Fujitsu World Tour Asia Conference 2017, held in Singapore on November 2, 2017, by Dr Kenneth Kwok, Principal Scientist at the Institute of High Performance Computing and Program Manager of the A*STAR AI Initiative.

When the field of AI was born at the Dartmouth summer workshop in 1956, it sought to replicate human intelligence and learning abilities in computers. More than 60 years on, that has turned out to be much more difficult than first believed. Artificial general intelligence is still nowhere near human level, but AI has succeeded by being more circumscribed and focusing on narrow problems. For example, current AI technology based on deep learning is good at discovering meaningful patterns in large data sets and using them for tasks such as classification and prediction.

Deep learning triggered the current AI boom

There have been previous AI booms, and this is said to be the third. Earlier successes included the expert systems of the 1970s and 1980s, and neural networks trained with backpropagation in the 1990s, before the current advances brought about by deep learning and big data.

Dramatic demonstrations of AI technology, such as IBM’s Watson triumphing over human champions on the U.S. quiz show “Jeopardy!”, and computer programs beating humans at games, including the unexpected victory of AlphaGo, developed by U.K.-based DeepMind, over a human Go world champion, have created much buzz. More recently, deep learning has been shown to be extremely good at tasks that had previously stumped computers, such as perceiving speech and images. One example of AI that has benefited consumers is speech-recognition software, including Apple’s Siri, Amazon’s Alexa and Google Assistant, which can now be found in smartphones and voice-interface devices.

Strength in speech and image recognition: AI in medical applications and infrastructure maintenance

A*STAR in Singapore is also advancing AI research. Take speech and image recognition, for example.

A*STAR’s work in speech technology includes machine translation (focusing on English, Chinese and Southeast Asian languages) and voiceprinting for speaker identification.

The English speech recognition technology developed by the Institute for Infocomm Research (I²R) has been shown to outperform the previous state of the art. It won the 2015 ASpIRE (Automatic Speech Recognition in Reverberant Environments) Challenge, run by IARPA (the Intelligence Advanced Research Projects Activity) of the U.S. Office of the Director of National Intelligence, in which 169 teams from 32 countries participated. The challenge tested recognition of speech recorded in highly reverberant environments. Similarly for Mandarin Chinese, a benchmark against major commercial speech recognition products, including those of Nuance and Google, both from the U.S., showed I²R’s engine performing more than 10% better.

In computer vision, A*STAR’s technology has been used for autonomous driving, to classify objects and predict their behaviours, including whether objects are approaching or moving away from the car.

A*STAR is also investigating whether illnesses can be diagnosed from large volumes of CT images using deep convolutional neural networks (CNNs). The CNN diagnoses pulmonary nodules with 80% accuracy. A*STAR is also testing whether a CNN can automatically diagnose tumours from images of tissue samples. When the tissue images were grouped into 43 classes by tissue characteristics, colour and texture, the CNN detected the presence or absence of tumours with 96% accuracy.

A*STAR has also developed AI to automate human body imaging, including MRI. One challenge in MRI is identifying parts of the human anatomy so that feature points such as joints and internal organs can be located accurately. This allows imaging parameters to be adjusted more precisely and speeds up the imaging process. Anatomical recognition can also help in handling artefacts such as motion blur caused by the patient moving during imaging, and occlusion, such as when a blanket covers parts of the body. A*STAR developed a solution using low-cost depth and thermal sensors and a skeleton dataset of 40,000 frames, which achieves an accuracy of 2 cm, similar to human performance.

Besides the medical field, AI can also be applied to improve processes in engineering and industry. For example, in the marine sector, hull surfaces must be inspected routinely, which is labour-intensive and physically dangerous. A*STAR has therefore developed technology to detect defects in surface coatings. Its virtual defect detection engineer uses deep learning vision technology to inspect the internal surfaces of vessels and achieves more than 80% accuracy.

Analysis of data other than speech and images

Of course, speech and image recognition are not everything. Other use cases require other forms of data analysis. For instance, A*STAR worked with Rolls-Royce to develop a health-index prognostics framework to assess the health of real-world induction motors. The collaboration led to a publication in IEEE Transactions on Industrial Electronics in 2016.* The framework has been used to predict the remaining useful life of a part or piece of machinery, reducing downtime due to scheduled maintenance and thereby also saving labour costs.
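The article does not describe how the framework works internally. As a rough illustration of the general idea behind health-index prognostics, here is a minimal sketch, assuming a hypothetical health index that falls from 1.0 (healthy) towards a hypothetical failure threshold of 0.3, and using a simple least-squares linear trend to extrapolate when the threshold will be crossed. This is not the method of the cited paper; the function names, threshold and data are all illustrative assumptions.

```python
from typing import Optional, Sequence, Tuple


def fit_line(t: Sequence[float], h: Sequence[float]) -> Tuple[float, float]:
    """Ordinary least-squares fit of h ~ slope * t + intercept."""
    n = len(t)
    mean_t = sum(t) / n
    mean_h = sum(h) / n
    s_tt = sum((ti - mean_t) ** 2 for ti in t)
    if s_tt == 0:
        raise ValueError("need observations at two or more distinct times")
    s_th = sum((ti - mean_t) * (hi - mean_h) for ti, hi in zip(t, h))
    slope = s_th / s_tt
    return slope, mean_h - slope * mean_t


def remaining_useful_life(
    t: Sequence[float],
    h: Sequence[float],
    failure_threshold: float = 0.3,  # hypothetical threshold, not from the source
) -> Optional[float]:
    """Extrapolate the fitted health-index trend to the failure threshold.

    Returns the time remaining after the last observation, or None if the
    fitted health index is not degrading.
    """
    slope, intercept = fit_line(t, h)
    if slope >= 0:
        return None  # no downward trend, so no predicted failure time
    t_fail = (failure_threshold - intercept) / slope
    return max(0.0, t_fail - t[-1])


if __name__ == "__main__":
    # Hypothetical operating hours and health-index readings for one motor.
    hours = [0, 100, 200, 300, 400]
    health = [1.0, 0.95, 0.9, 0.85, 0.8]
    print(remaining_useful_life(hours, health))  # roughly 1000 hours
```

A linear trend is only the simplest possible choice; real prognostics frameworks typically model nonlinear degradation and report uncertainty bounds, which would let maintenance be scheduled based on predicted condition rather than on fixed intervals.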

Looking for human-level AI

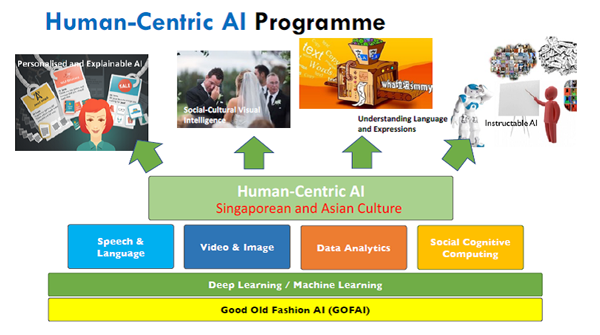

However, A*STAR believes that there is still a big gap between AI and true intelligence. Current AI essentially relies on pattern matching. Whether for object recognition, question answering or translation, it learns to map an input to an output without much understanding in the process. Furthermore, this process is often a black box, offering no explanation of how the answers are arrived at. This is why A*STAR has recently started a programme on human-centric AI (see Fig. 2), which aims to understand humans, reason for humans and learn like humans.

Fig. 2 Aiming for human-centric AI (Source: A*STAR)

The human-centric AI that A*STAR is researching will combine machine learning with knowledge, giving machines social and cultural intelligence and the ability to understand language in a more human-like way. The result will be AI that behaves more appropriately in various social contexts and interacts with humans in ways that engender trust. For example, human-centric AI will be able to explain its reasoning to a human user in a way that suits the user’s needs. In the medical domain, for instance, an AI would explain a diagnosis to a patient differently than it would to a doctor. Human-centric AI will also be able to infer situations, human relationships and social meanings from videos and images.

All this is still very hard to do, but the journey is an exciting one and will continue…

*“Health Index-Based Prognostics for Remaining Useful Life Predictions in Electrical Machines”, Feng Yang, Mohamed Salahuddin, et al., IEEE Transactions on Industrial Electronics, 2016.