Kawasaki, Japan, March 17, 2017

Fujitsu Laboratories Ltd. today announced the development of technology that rapidly analyzes data by integrating NoSQL databases, which are used to accumulate large volumes of unstructured IoT data, with relational databases, which are used for data analysis in mission-critical enterprise systems.

NoSQL databases are used to store large volumes of data, such as the IoT data that various IoT devices output in a variety of structures. However, because converting the structure of large volumes of unstructured IoT data takes time, analyses involving data across NoSQL and relational databases have suffered from long processing times.

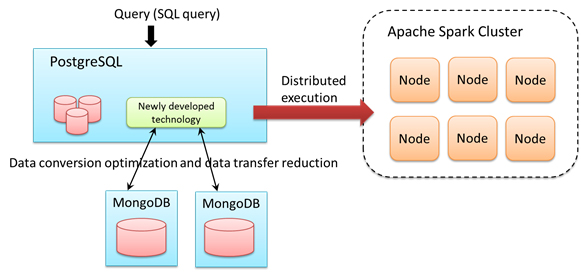

Fujitsu Laboratories has now developed technology that analyzes SQL queries to seamlessly access both relational and NoSQL databases, optimizing data conversion and reducing the amount of data transferred. It has also developed technology that automatically partitions data and efficiently distributes execution on Apache Spark(1), a distributed parallel execution platform. Together, these technologies enable rapid analysis that integrates NoSQL databases with relational databases.

When this newly developed technology was implemented in PostgreSQL(2), an open source relational database, and its performance was evaluated using the open source MongoDB(3) as the NoSQL database, the data conversion optimization and data transfer reduction technologies made query processing 4.5 times faster. In addition, the efficient distributed execution technology on Apache Spark achieved speedups that scaled with the number of nodes.

With this technology, a retail store, for example, could continually roll out a variety of IoT devices to understand information such as customers' in-store movements and actions, and quickly try new analyses that relate this information to data from existing mission-critical systems. This would contribute to the implementation of one-to-one marketing strategies that offer products and services suited to each customer.

Details of this technology were announced at the 9th Forum on Data Engineering and Information Management (DEIM2017), which was held in Takayama, Gifu, Japan, March 6-8.

Development Background

In recent years, IoT and sensor technologies have been advancing rapidly, enabling the collection of new information that was previously difficult to obtain. Connecting this new data with data in existing mission-critical and information systems is expected to enable many kinds of analysis that were previously impossible.

For example, in a retail store it is now becoming possible to obtain a wide variety of IoT data. Analyzing the Wi-Fi signal strength of customers' mobile devices can show where customers linger in the store, while analyzing image data from surveillance cameras can reveal both detailed actions, such as which products customers looked at and picked up, and individual characteristics, such as age, gender, and route through the store. By properly combining this data with existing business data, such as purchase and revenue data, businesses are expected to be able to implement one-to-one marketing strategies that offer products and services suited to each customer.

Issues

When running queries that span relational and NoSQL databases, fast data conversion and analysis require a predefined data format for converting the unstructured data stored in the NoSQL database into structured data that the relational database can handle. As the use of IoT data has grown, however, defining formats in advance has become difficult, because new information for analysis is frequently added, for example from newly installed sensors, or from existing sensors and cameras whose software updates provide more data, such as customers' gazes, actions, and emotions. At the same time, data analysts want methods that do not require predefined data formats, so that they can quickly try new analyses. Without a predefined format, however, the conversion overhead incurred each time the database is queried is substantial, lengthening the processing time of analyses.

About the Technology

Now Fujitsu Laboratories has developed technology that can quickly run a seamless analysis spanning relational and NoSQL databases without a predefined data format, as well as technology that accelerates analysis using Apache Spark clusters as a distributed parallel platform. In addition, Fujitsu Laboratories implemented its newly developed technology in PostgreSQL, and evaluated its performance using MongoDB databases storing unstructured data in JSON(4) format as the NoSQL databases.

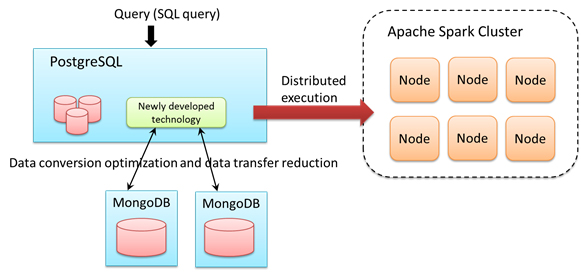

Figure 1: Structural concept of the newly developed technology

Details of the technology are as follows:

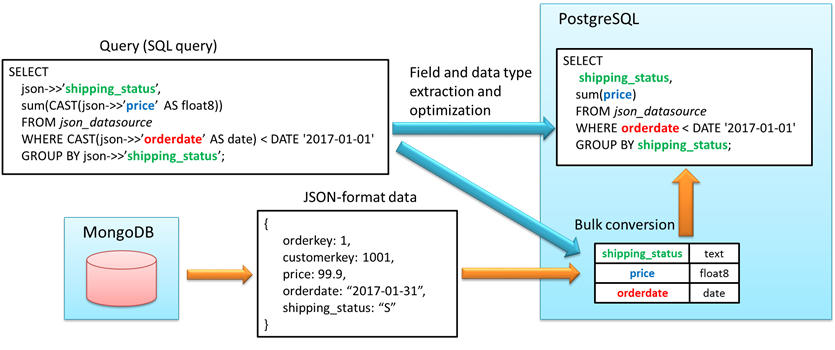

1. Data Conversion Optimization Technology

This technology analyzes database queries (SQL queries) that access data in a NoSQL database, extracting the portions that specify the necessary fields and their data types in order to identify the data format needed for conversion. The query is then optimized based on these results, and overhead is reduced by converting the NoSQL data in bulk, providing performance equivalent to existing processing that uses a predefined data format.
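To make the idea concrete, here is a minimal sketch of inferring a conversion format from the query itself. It assumes, purely for illustration, that NoSQL documents are exposed to PostgreSQL as a single JSON column named `doc` (hypothetical) and that fields are referenced with PostgreSQL's `->>` operator plus a cast, e.g. `(doc->>'dwell_sec')::int`. The helper names `infer_schema` and `bulk_convert` are hypothetical; this is not Fujitsu's implementation.

```python
import json
import re

# Hypothetical convention: NoSQL fields appear in the SQL as (doc->>'field')::type
FIELD_REF = re.compile(r"\(doc->>'(\w+)'\)::(\w+)")

# Assumed subset of SQL type names mapped to Python converters
CONVERTERS = {"int": int, "integer": int, "numeric": float, "float8": float, "text": str}


def infer_schema(sql):
    """Return an ordered {field: converter} mapping for the fields the query needs.

    Raises KeyError for SQL types not covered by CONVERTERS.
    """
    schema = {}
    for field, sql_type in FIELD_REF.findall(sql):
        schema.setdefault(field, CONVERTERS[sql_type.lower()])
    return schema


def bulk_convert(json_lines, schema):
    """Convert a batch of JSON documents to typed tuples.

    Only the fields named in the schema are extracted; all other fields are
    ignored, and a missing or null field becomes None.
    """
    rows = []
    for line in json_lines:
        doc = json.loads(line)
        rows.append(tuple(
            conv(doc[f]) if doc.get(f) is not None else None
            for f, conv in schema.items()
        ))
    return rows
```

For a query such as `SELECT (doc->>'store')::text, SUM((doc->>'dwell_sec')::int) FROM iot GROUP BY 1`, the schema is derived from the query text, so a document that gains a new field (say, a gaze vector after a camera software update) is still converted without any format being declared in advance.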

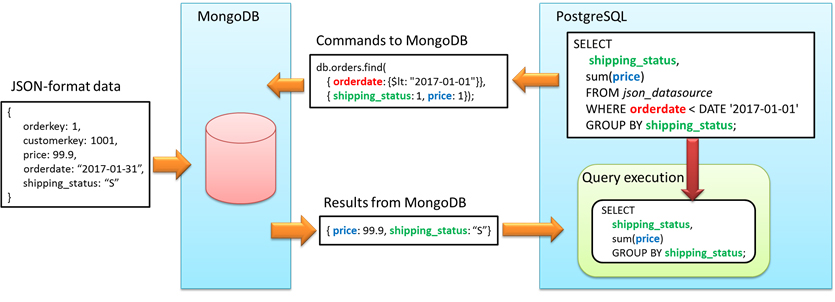

2. Technology to Reduce the Amount of Data Transferred from NoSQL Databases

Fujitsu Laboratories developed technology that analyzes the database query and migrates part of the processing, such as filtering, from the PostgreSQL side to the NoSQL side. This minimizes the amount of data transferred from the NoSQL data source, accelerating processing.
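A simplified sketch of this kind of filter pushdown follows. It assumes the WHERE clause has already been parsed into a list of AND-ed `(field, op, value)` predicates (a hypothetical representation). Comparisons that map onto standard MongoDB query operators (`$eq`, `$ne`, `$gt`, `$gte`, `$lt`, `$lte`) are sent to MongoDB; anything else stays on the PostgreSQL side. The function name `push_down` is illustrative only.

```python
# SQL comparison operators that map directly onto MongoDB query operators
MONGO_OPS = {"=": "$eq", "<>": "$ne", ">": "$gt", ">=": "$gte", "<": "$lt", "<=": "$lte"}


def push_down(predicates):
    """Split AND-ed predicates into a MongoDB filter and a local remainder.

    predicates: list of (field, op, value) tuples (assumed pre-parsed).
    Assumes at most one predicate per (field, operator) pair; a repeated
    pair would overwrite the earlier value in the filter document.
    """
    mongo_filter, local = {}, []
    for field, op, value in predicates:
        if op in MONGO_OPS:
            # Several operators on one field are combined with implicit AND in MongoDB
            mongo_filter.setdefault(field, {})[MONGO_OPS[op]] = value
        else:
            # e.g. LIKE: evaluated by PostgreSQL after the (smaller) result arrives
            local.append((field, op, value))
    return mongo_filter, local
```

For example, `push_down([("age", ">=", 20), ("age", "<", 30), ("zone", "=", "A3"), ("name", "LIKE", "%son")])` yields the filter document `{"age": {"$gte": 20, "$lt": 30}, "zone": {"$eq": "A3"}}`, which is the form pymongo's `Collection.find()` accepts, while the `LIKE` predicate is left for PostgreSQL. Only documents that already pass the pushed-down conditions cross the network.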

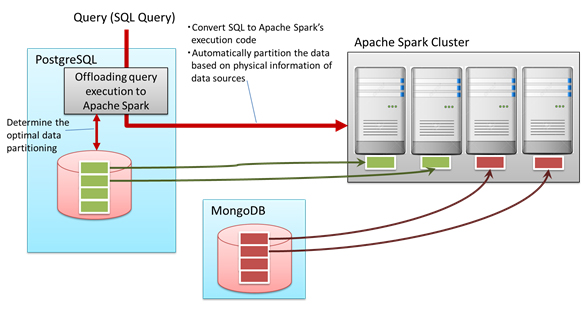

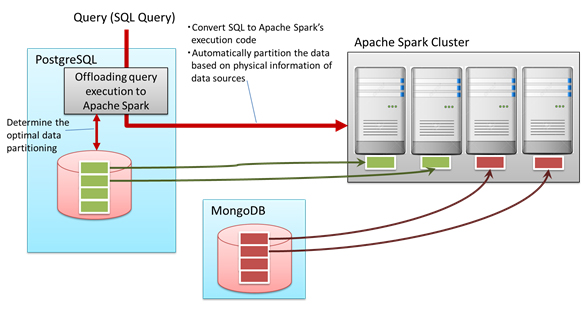

3. Technology to Automatically Partition Data for Distributed Processing

Fujitsu Laboratories developed technology for efficient distributed execution of queries across multiple relational and NoSQL databases on Apache Spark. It automatically determines the optimal data partitioning to avoid unbalanced load across the Apache Spark nodes, based on information such as where the data is placed in each database's storage.

Figure 4: Automating distributed execution of Apache Spark clusters
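The release does not describe the partitioning algorithm itself. As a rough illustration of load-balanced, placement-aware partitioning, the sketch below uses a standard greedy heuristic (largest chunk first, assigned to the least-loaded node, with ties broken in favor of the node that stores the chunk). The chunk representation and the function name `partition` are hypothetical, and this heuristic is swapped in for illustration rather than taken from Fujitsu's method.

```python
def partition(chunks, nodes):
    """Assign storage chunks to Spark nodes, keeping total bytes per node balanced.

    chunks: list of (chunk_id, size_bytes, preferred_node) tuples, where
            preferred_node is the node holding the data (assumed known from storage metadata).
    nodes:  list of node names.
    Greedy longest-processing-time heuristic: place the largest chunk first on
    the least-loaded node; on equal load, prefer the chunk's own node (locality).
    """
    load = {n: 0 for n in nodes}
    plan = {n: [] for n in nodes}
    for chunk_id, size, pref in sorted(chunks, key=lambda c: -c[1]):
        # (load, is_remote): False sorts before True, so the local node wins ties
        target = min(nodes, key=lambda n: (load[n], n != pref))
        load[target] += size
        plan[target].append(chunk_id)
    return plan, load
```

With two nodes and chunks of 100, 60, 50, and 40 bytes, this assigns 140 bytes to one node and 110 to the other, the most even split possible for those sizes. A production planner would also weigh the cost of moving non-local data, which this sketch only uses as a tie-breaker.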

Effects

Fujitsu Laboratories implemented this newly developed technology in PostgreSQL and evaluated its performance using MongoDB as the NoSQL database. In an evaluation with TPC-H benchmark queries, which measure the performance of decision support systems, applying the first two technologies made overall processing 4.5 times faster than with existing technology. In addition, when the third technology was used to run this evaluation on a four-node Apache Spark cluster, performance improved to 3.6 times that of a single node.
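Expressed as parallel efficiency (speedup divided by node count, a standard measure that the release itself does not report), the four-node result works out as follows, where $T_1$ and $T_4$ denote processing time on one and four nodes:

$$
S_4 = \frac{T_1}{T_4} = 3.6, \qquad E_4 = \frac{S_4}{4} = \frac{3.6}{4} = 0.9
$$

That is, the cluster reached about 90% of ideal linear scaling.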

With this newly developed technology, IoT data such as sensor data can now be accessed efficiently through SQL, the interface common throughout enterprise systems, while flexibly accommodating frequent changes in IoT data formats. This enables fast processing of analyses that include IoT data.

Future Plans

Fujitsu Laboratories will continue testing this newly developed technology on large-scale Apache Spark clusters, with the aim of commercial implementation by Fujitsu Limited within fiscal 2017.

E-mail: csi-db@ml.labs.fujitsu.com