Fujitsu Laboratories Ltd. today announced the development of lane-departure warning technology that works with the vehicle-mounted wide-angle cameras used as drive recorders(1), to promote safer driving.

In recent years, drive recorders with wide-angle lenses have come into growing use, mainly on commercial vehicles. Combining them with lane-departure warnings, which alert drivers when the vehicle deviates from its lane, has been eagerly anticipated as a way to reduce traffic accidents. However, typical lane-departure warning technology relies on narrow-angle cameras that view the white lines marking a lane at a considerable distance. Wide-angle cameras, which view only some of the lane markers at short distance, do not always detect the lane correctly and have yet to meet the level of performance expected for warnings.

Fujitsu Laboratories has now developed a driving-lane recognition technology that estimates the correct lane configuration for the entire road by smoothly joining multiple images of the road surface while compensating for positional deviations relative to the lane markers. The result is lane-departure warning performance of 95% even with a wide-angle camera, which exceeds the level of devices that use narrow-angle cameras. This technology adds lane-departure warnings, bringing new preventive-safety features to the driving experience without requiring the installation of a separate camera.

Details of this technology are being presented at the Symposium on Sensing via Image Information (SSII2014), opening June 11 at the Pacifico Yokohama.

Background

As part of preventive-safety measures that reduce accidents, lane-departure warning systems that employ front and rear cameras are becoming more and more common, with some countries making them mandatory and others implementing subsidies to promote their adoption. Lane-departure warning systems use forward-looking cameras to prevent drivers from leaving their lanes accidentally. The standard of performance expected of these systems is that they will issue a warning at a distance of 30 centimeters or less from the lane marker, and this has typically been achieved using a dedicated camera with a narrow-angle lens. At the same time, drive recorders are increasingly coming into use as a way to record accidents and promote safe driving. These typically use cameras with wide-angle lenses. In terms of cost, the lane-departure warning system would ideally work without a separate camera, so it would be desirable for the drive recorder's wide-angle camera to fulfill the lane-departure warning function with sufficient performance.

Issues

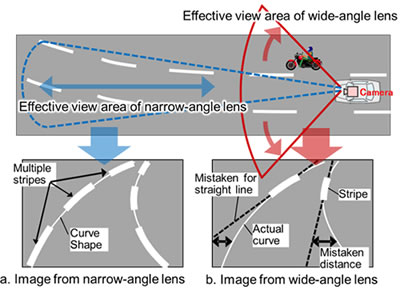

Previous lane-departure warning systems have used a camera with a narrow-angle lens, giving a 30° field of view, to observe the road surface at some distance. This approach can reliably recognize a variety of lane configurations and meets the performance criteria for lane-departure warnings. The wide-angle lenses that drive recorders use have a 130° field of view with poor resolution at long distances. They can therefore only recognize lane markers at short distances and cannot accurately recognize lane configurations, so they have not met the performance criteria for lane-departure warnings. For a wide-angle lens to meet the standards for lane-departure warnings, the following issues would need to be resolved.

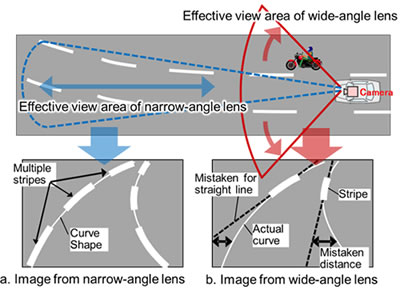

1. Misidentifying lane markers on curving roads

Viewing a curving road through a narrow-angle lens shows multiple dashed white-stripe lane markers along the road (Figure 1a), whereas a wide-angle lens (130°) shows only what is close, namely the nearest pair of stripes to the left and right (Figure 1b). Because the stripes are only about eight meters long, this is not sufficient to recognize the bend in some roads (depending on the curvature). A curving road could therefore be mistaken for a straight one, producing errors in the estimated distance to the white lines farther ahead.

Figure 1: How images from wide-angle lenses produce errors in estimating the shape of curved lanes from lane markers
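
To see why a single eight-meter stripe says so little about a bend, the following sketch computes how far a circular arc drifts sideways from its tangent line. The 300-meter radius and the 40-meter look-ahead distance are illustrative assumptions, not values from Fujitsu Laboratories; only the eight-meter stripe length comes from the text above.

```python
import math

def lateral_offset(radius_m, distance_m):
    """Sideways displacement of a circular arc from its tangent line,
    measured at distance_m along the tangent."""
    return radius_m - math.sqrt(radius_m**2 - distance_m**2)

# Assumed curve radius of 300 m (hypothetical example value).
near = lateral_offset(300.0, 8.0)    # over one visible stripe
far = lateral_offset(300.0, 40.0)    # at an assumed longer viewing distance
print(f"offset at 8 m: {near:.2f} m, offset at 40 m: {far:.2f} m")
```

Under these assumptions the stripe bends away from a straight line by only about 0.11 meters over its eight-meter length, compared with roughly 2.7 meters at 40 meters, so at short range a curve is hard to tell apart from a straight road.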

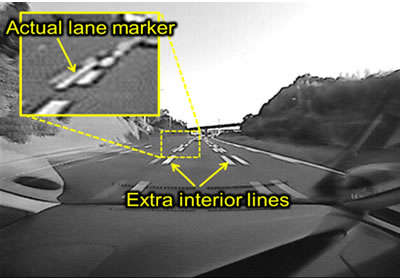

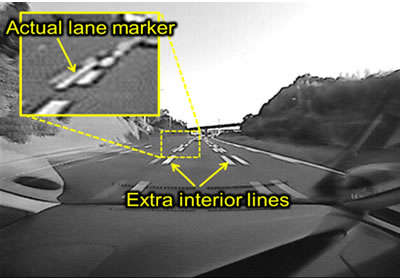

2. Misidentifying doubled lines that serve as speed warnings

Japan uses short, doubled white lines to warn motorists to slow down for sharply curving roads. When viewing these doubled lines through a wide-angle lens, the low resolution of the lens makes it impossible to distinguish the inner speed-warning lines from the outer lines that mark the lane's actual boundaries (Figure 2), so the inner lines are mistaken for the actual lane boundary, erroneously setting off the lane-departure warning.

Figure 2: Multiple lane markers viewed through wide-angle lens

About the Technology

Fujitsu Laboratories has now developed a white-line detection technology that compensates for the low resolution that comes with wide-angle lenses by stitching together multiple images over time to create an accurate picture of the entire road, making it possible to accurately estimate lane configuration.

Key features of the technology are as follows.

1. Dashed lines on curved roads

(1) Stitches together multiple images to estimate lane shape (Method 1)

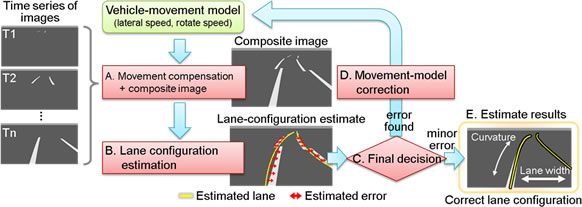

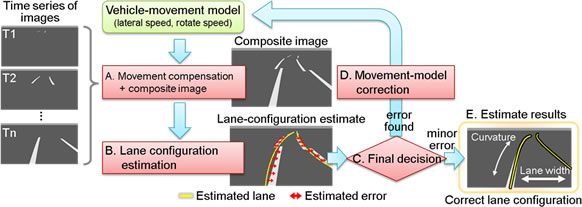

Building on the insight that road shapes do not change significantly in a very short amount of time, this technology models the lane shape by stitching together a time series of images (shot every 100 milliseconds, for example) so that the white lines are arranged correctly. The vehicle's movement introduces some deviation into the positions of the white lines in the images, so simply stitching these images together would not work. Fujitsu Laboratories therefore developed a technology that simultaneously estimates the vehicle's movement and the lane shape to correctly estimate lane configuration (Figure 3).

Figure 3: Lane-configuration estimated with composite image from multiple time slices with vehicle-movement correction

Specifically, it works as follows:

- The technology models the vehicle's lateral movement (constant-speed translation) and rotation (constant-speed rotation), and uses the movement model from the time series of white-line images to compensate for movement and produce a composite road-surface image (Figure 3a).

- The technology estimates the lane configuration based on the white lines in the composite image (Figure 3b). If the actual movement differs from the modeled movement, the result will be an estimated deviation as shown in Figure 3b.

- The movement model (translation speed and rotation speed) is revised to reduce the estimated deviation, and the process repeats from the compositing step in Figure 3a until the deviation is acceptably small (Figure 3d). The result is a correct estimate of the lane configuration that compensates for vehicle movement (Figure 3e).
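
The loop described above can be sketched as follows. This is a minimal illustration under stated assumptions, not Fujitsu Laboratories' implementation: the lane marker is modeled as a quadratic curve, forward speed is taken as known, the observations are synthetic, and a coarse grid search stands in for the iterative revision of the movement model. All function names, constants, and parameter ranges are hypothetical.

```python
import numpy as np

DT = 0.1       # seconds between frames (the 100 ms example in the text)
SPEED = 20.0   # forward speed in m/s; assumed known, e.g. from the speedometer

def pose(k, lat_speed, yaw_rate):
    """Rotation matrix and position of the vehicle at frame k, relative to frame 0."""
    t = k * DT
    c, s = np.cos(yaw_rate * t), np.sin(yaw_rate * t)
    return np.array([[c, -s], [s, c]]), np.array([SPEED * t, lat_speed * t])

def simulate(true_lat, true_yaw, n_frames=8):
    """Synthetic stand-in for camera data: marker points seen in each vehicle frame."""
    frames = []
    for k in range(n_frames):
        rot, pos = pose(k, true_lat, true_yaw)
        x = np.linspace(pos[0] + 2.0, pos[0] + 10.0, 9)  # only ~8 m of marker visible
        y = 1.7 + 0.01 * x + 0.002 * x**2                 # true marker, frame-0 coordinates
        frames.append((np.column_stack([x, y]) - pos) @ rot)  # frame 0 -> vehicle frame
    return frames

def deviation(frames, lat_speed, yaw_rate):
    """Composite the frames under a hypothesized movement model, fit the lane
    shape, and return (RMS deviation from the fitted curve, fitted coefficients)."""
    parts = []
    for k, pts in enumerate(frames):
        rot, pos = pose(k, lat_speed, yaw_rate)
        parts.append(pts @ rot.T + pos)                   # vehicle frame -> frame 0
    composite = np.vstack(parts)
    coeffs = np.polyfit(composite[:, 0], composite[:, 1], 2)
    resid = composite[:, 1] - np.polyval(coeffs, composite[:, 0])
    return float(np.sqrt(np.mean(resid**2))), coeffs

def estimate(frames):
    """Pick the movement model whose composite image deviates least from a
    smooth lane shape (grid search used here in place of iterative revision)."""
    best = None
    for lat in np.linspace(-1.0, 1.0, 21):        # candidate lateral speeds, m/s
        for yaw in np.linspace(-0.1, 0.1, 21):    # candidate yaw rates, rad/s
            dev, coeffs = deviation(frames, lat, yaw)
            if best is None or dev < best[0]:
                best = (dev, lat, yaw, coeffs)
    return best

dev, lat, yaw, coeffs = estimate(simulate(true_lat=0.3, true_yaw=0.02))
print(f"lateral speed {lat:.2f} m/s, yaw rate {yaw:.3f} rad/s, deviation {dev:.1e} m")
```

With a wrong movement hypothesis, marker points seen in overlapping frames land in inconsistent places in the composite, so the fitted curve leaves a larger deviation. That deviation is the signal used to choose the movement model, and the lane shape comes out of the same fit.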

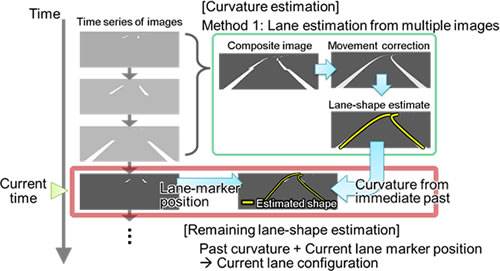

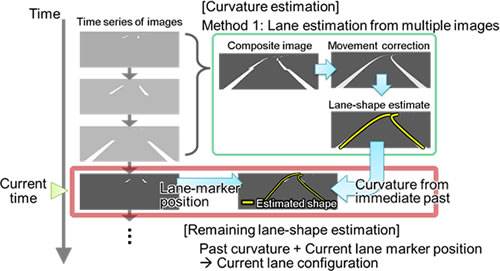

(2) Estimating parameters based on time differences (Method 2)

The lane-estimating process needs to work in real time for each image, or else the lane-departure warning will come too late. Method 1 above can reliably recognize lanes, including curves, but using multiple images over time is an inherently intermittent process, making it difficult to achieve genuine real-time performance. With most lane configurations, knowing the curvature of the road makes it possible to estimate the lane from a single image at every time increment. Fujitsu Laboratories therefore developed a method that uses Method 1 only to calculate curvature from multiple forward-looking images from the immediate past (Figure 4), and estimates the remaining lane-shape parameters (such as road width and vehicle heading) from the lane markers in the current image, which is captured at a different time from the curvature estimate. As road curvature does not change significantly over a short period of time, there is little loss in accuracy from reusing the curvature estimated from the most recent images. This method can be used to estimate lanes in real time at each time slice.

Figure 4: Method for estimating parameters from time differences
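
As a sketch of this split, suppose the lane marker is modeled as a quadratic curve in vehicle coordinates (an assumption made here for illustration; the source does not specify the lane model). With the curvature term held fixed at the value obtained from the recent multi-frame estimate, the remaining parameters reduce to a straight-line fit on the current image. The function and variable names are hypothetical, and the data are synthetic.

```python
import numpy as np

def fit_current_frame(x, y, curvature_coeff):
    """Estimate lateral offset and heading slope from one frame, with the
    quadratic (curvature) coefficient supplied from earlier frames."""
    # Remove the known curved part, leaving a straight line to fit.
    slope, offset = np.polyfit(x, y - curvature_coeff * x**2, 1)
    return offset, slope

# Marker points from a single frame, covering only a short stretch of road.
x = np.linspace(2.0, 10.0, 9)
y = 1.7 + 0.01 * x + 0.002 * x**2
offset, slope = fit_current_frame(x, y, curvature_coeff=0.002)
print(f"offset {offset:.3f} m, heading slope {slope:.4f}")
```

Because only two parameters are fitted per frame, the per-frame work is a small linear least-squares problem, which is in keeping with the real-time requirement described above.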

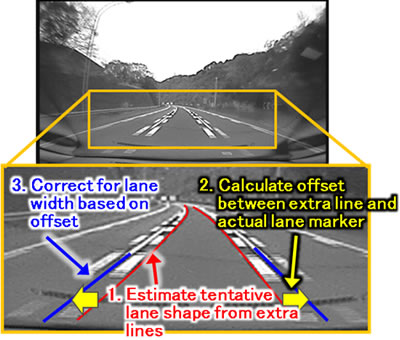

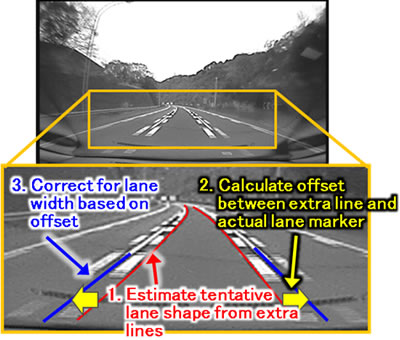

2. Using doubled lane markers by correcting lane width

Where lane markers are doubled, the normal lane markers are accompanied by extra markers that run parallel to them, inboard by a fixed distance. This means that a tentative lane configuration can be estimated using the extra inboard lines. Because the normal lane markers appear larger as the vehicle moves closer to them, the technology measures the offset between the extra stripes and the regular lane markers and uses it to correct the lane width, producing an accurate estimate of lane configuration (Figure 5).

Figure 5: Using doubled lines to correct for lane width
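
One way to read this correction is sketched below, purely as an illustration. It assumes lateral positions are measured in meters from the vehicle's centerline with positive values to the left, and that the same offset applies on both sides; the function name, the sign convention, and the sample numbers are all hypothetical.

```python
def corrected_boundaries(inner_left_m, inner_right_m, offset_m):
    """Shift the tentative boundaries taken from the inboard stripes outward
    by the measured offset to recover the actual lane boundaries."""
    return inner_left_m + offset_m, inner_right_m - offset_m

# Tentative boundaries from the inboard lines, then corrected (example values).
left, right = corrected_boundaries(1.4, -1.4, 0.3)
print(f"corrected lane width: {left - right:.2f} m")
```

The tentative estimate keeps the lane shape, and only its width changes, which matches the description of the extra stripes running parallel to the regular markers.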

Results

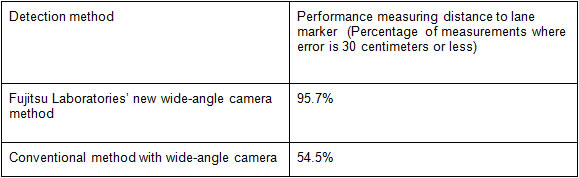

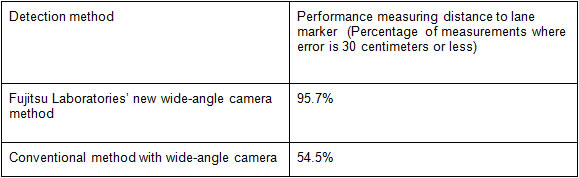

When assessed using 160 minutes of actual camera imaging data, this technology achieved 96% accuracy in measuring the distance to lane markers, roughly double that of existing methods, and met the performance standard for that measurement (errors of 30 centimeters or less) (Figure 6).

Figure 6: Performance evaluation on measuring distance to white lines

Furthermore, lane-departure warning performance was evaluated over 460 lane changes, and lane departures were correctly identified with 95% accuracy.

This technology was found to reliably detect lane markers even using a wide-angle camera, and can use common drive recorder cameras to satisfy the requirements for lane-departure warning performance with low-cost equipment.

Future Plans

Fujitsu Laboratories aims to bring this technology into practical use in fiscal 2014 so that it can be used in lane-departure warning systems in drive recorders. Beyond warning drivers to enhance motoring safety, Fujitsu Laboratories is developing this technology for other applications, possibly including the analysis of operating risk from lane departures captured by drive recorders.

E-mail: ms_ldw@ml.labs.fujitsu.com