Beijing, April 01, 2015

【Background】

Traffic conditions can be normal or abnormal. Abnormal states severely reduce traffic efficiency and should be monitored. As the number of vehicles increases rapidly, abnormal states such as traffic accidents, traffic jams, and traffic violations (collectively called anomalies) occur more frequently. Some kinds of anomalies are difficult to detect with traditional transportation devices such as loop detectors.

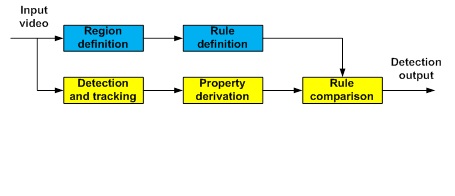

More and more surveillance cameras are being deployed in transportation for monitoring purposes, and using these cameras for anomaly detection would be efficient and low-cost. Traditional anomaly detection methods based on surveillance video are designed only for limited scenes, handle only a single type of incident, and have low detection precision. Built on an efficient framework consisting of road region definition, traffic rule definition, detection and tracking of vehicle and non-vehicle objects, object property derivation, and rule comparison, our technology can be applied to all traffic scenes and all types of anomalies with high precision.

【Topics】

The following problems exist in traditional algorithms or existing products:

1) Most methods address only a single type of anomaly, for example detecting only traffic accidents, only traffic jams, or only traffic violations. For each type of anomaly, a method covers only a limited set of cases. For example, accident detection is limited to cases such as lane changes, stopped vehicles, slow vehicles, and fallen objects.

2) Most of them are tested and applied in simple scenes with few vehicles or few accidents, so in common real-world traffic scenes their detection precision is low and their false detection rate is high.

These methods are not applicable to intersections with complex traffic conditions. We therefore developed an efficient traffic anomaly detection technology that works robustly in both simple and complex traffic conditions, achieves high detection precision, and runs in real time as a software implementation.

【Technology】

The surveillance-video-based anomaly detection system consists of five components: road region definition, traffic rule definition, detection and tracking of vehicle and non-vehicle objects, object property derivation, and rule comparison, as shown in Figure 1.

Figure 1 Anomaly detection framework

The video is input from a surveillance camera, which should be installed at a high position so that it can watch multiple road sections at an intersection, as shown in Figure 2. In this way, one camera can monitor all directions at an intersection, which is efficient and low-cost.

Figure 2 Video input example

From the input video, the road regions are defined: each lane, the zebra crossings, the central area of the intersection, and prohibited areas. The property of each road region is represented by a 16-bit integer, with values from 0x0 to 0xFFFF.
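The source states only that each region property is a 16-bit integer; it does not say how the bits are used. As a purely hypothetical illustration, the sketch below packs a region type into the low 4 bits and a region index (for example a lane number) into the next 8 bits, leaving the top 4 bits reserved. The bit layout and the names `RegionType`, `pack_region`, and `unpack_region` are assumptions.

```python
from enum import IntEnum

# Hypothetical layout of a 16-bit region property word (not specified in the source):
#   bits 0-3   region type
#   bits 4-11  region index, e.g. lane number
#   bits 12-15 reserved, left at 0
TYPE_MASK = 0x000F
INDEX_SHIFT = 4
INDEX_MASK = 0x0FF0


class RegionType(IntEnum):
    NONE = 0
    LANE = 1
    ZEBRA_CROSSING = 2
    CENTRAL_AREA = 3
    PROHIBITED = 4


def pack_region(region_type: RegionType, index: int) -> int:
    """Pack a region type and an 8-bit index into one 16-bit property value."""
    if not 0 <= index <= 0xFF:
        raise ValueError("region index must fit in 8 bits")
    return (int(region_type) & TYPE_MASK) | ((index << INDEX_SHIFT) & INDEX_MASK)


def unpack_region(value: int) -> tuple[RegionType, int]:
    """Recover the region type and index from a 16-bit property value."""
    if not 0x0 <= value <= 0xFFFF:
        raise ValueError("property must be a 16-bit value")
    return RegionType(value & TYPE_MASK), (value & INDEX_MASK) >> INDEX_SHIFT
```

For example, `pack_region(RegionType.LANE, 3)` yields `0x31`, and `unpack_region(0x31)` returns the lane type and index 3. Packing several attributes into one word keeps a per-pixel region map compact (a 16-bit image).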

For each region, a specific rule is defined according to traffic signs and traffic regulations. For example, in a left-turn lane the rule is set to "left turn only; going straight prohibited".
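A minimal sketch of a per-region rule table, assuming each lane region lists the movements its traffic sign permits. The movement set, the region IDs such as `"lane_left_1"`, and the helper `check_movement` are hypothetical; the source gives only the left-turn-lane example.

```python
from enum import Flag


class Movement(Flag):
    """Movements an object may make when leaving a lane region (hypothetical set)."""
    NONE = 0
    LEFT = 1
    STRAIGHT = 2
    RIGHT = 4
    U_TURN = 8


# Hypothetical rule table: region ID -> movements allowed by the traffic sign.
RULES = {
    "lane_left_1": Movement.LEFT,                               # left turn only, straight prohibited
    "lane_straight_1": Movement.STRAIGHT,
    "lane_straight_right_1": Movement.STRAIGHT | Movement.RIGHT,
}


def check_movement(region_id: str, movement: Movement) -> bool:
    """Return True if every bit of `movement` is allowed in the region, else False."""
    allowed = RULES.get(region_id, Movement.NONE)  # unknown regions allow nothing
    return movement != Movement.NONE and (movement & allowed) == movement
```

With this table, a vehicle that enters from `"lane_left_1"` and goes straight fails the check and would be reported as a violation. Using flags lets a single region allow several movements, as in a shared straight/right lane.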

For anomaly detection, two kinds of objects must be detected: vehicles and non-vehicles. Vehicles include large ones (trucks, buses, vans, etc.) and small ones (cars, motorcycles, etc.). Non-vehicles include pedestrians, people using transportation tools (bicycles, scooters, etc.), and animals. Each kind of object can be in one of two states: stationary or moving.
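The source does not say how an object's state is decided. One simple possibility, shown here only as an assumption, is to call an object stationary when its centroid has shifted by less than a pixel threshold across a recent window of frames. The `TrackedObject` class, the window length, and the threshold are hypothetical.

```python
from collections import deque
from dataclasses import dataclass, field
import math


@dataclass
class TrackedObject:
    """A detected object plus a short history of its centroid positions (hypothetical)."""
    kind: str               # e.g. "vehicle" or "non-vehicle"
    window: int = 15        # number of recent frames to compare (assumed value)
    max_shift: float = 2.0  # pixels; a smaller displacement means stationary (assumed value)
    history: deque = field(init=False)

    def __post_init__(self) -> None:
        self.history = deque(maxlen=self.window)  # keeps only the last `window` centroids

    def update(self, x: float, y: float) -> None:
        self.history.append((x, y))

    def state(self) -> str:
        """Return "stationary", "moving", or "unknown" (too few frames seen so far)."""
        if len(self.history) < self.window:
            return "unknown"
        (x0, y0), (x1, y1) = self.history[0], self.history[-1]
        shift = math.hypot(x1 - x0, y1 - y0)
        return "stationary" if shift < self.max_shift else "moving"
```

Comparing only the oldest and newest centroids is deliberately crude: it tolerates detection jitter but would miss an object that moves away and returns within the window.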

Many methods can easily detect moving objects using motion information, but stationary objects are hard to detect with motion information alone. Some methods, for example training a car recognizer with machine learning, can detect stationary cars using color, texture, or edge information. However, they are restricted to the training scenes, to fixed camera parameters (installation position, angle, focal length, etc.), and to limited lighting and weather conditions.
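The limitation of motion information can be illustrated with synthetic frames: frame differencing (comparing consecutive frames) gives no response for a car that has already stopped, while differencing against an empty-road background still marks it. The frame size, pixel values, and threshold are arbitrary illustration choices; this is not the authors' detection method.

```python
import numpy as np

H, W, THRESH = 8, 8, 30  # tiny synthetic frames and an assumed difference threshold

background = np.zeros((H, W), dtype=np.uint8)  # empty road
prev_frame = background.copy()
prev_frame[2:5, 2:5] = 200                     # a car that has already stopped
curr_frame = prev_frame.copy()                 # next frame: the car has not moved


def diff_mask(a: np.ndarray, b: np.ndarray) -> np.ndarray:
    # Cast to a signed type so the subtraction cannot wrap around in uint8.
    return np.abs(a.astype(np.int16) - b.astype(np.int16)) > THRESH


motion_mask = diff_mask(curr_frame, prev_frame)      # frame differencing
foreground_mask = diff_mask(curr_frame, background)  # background subtraction

print(motion_mask.sum(), foreground_mask.sum())  # prints: 0 9
```

The stopped car is invisible to the motion cue but fully covered (all 9 pixels) by the background difference, which is why keeping a clean background matters for queued vehicles.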

We use a novel foreground object detection method that detects not only moving cars and people but also stationary cars waiting in queues. Moreover, the method can detect both large and small objects. A detection example is shown in Figure 3.

Figure 3 Object detection example

The system can start from any video frame and obtains the foreground by computing the difference between the current frame and the background image. When the background image is extracted adaptively, ghost objects appear in two cases. In the first case, a moving object that becomes stationary is gradually adapted into the background; when it later starts to move again, a ghost is left behind. In the second case, an object that already belongs to the background (e.g., a stopped vehicle) starts to move, which also causes a ghost. In our algorithm, a moving object that becomes stationary is not adaptively merged into the background, so unlike in other methods, the first kind of ghost does not occur. Our algorithm consists of a working model and several lightweight monitoring models. When a background object starts to move, the monitoring models detect the background contamination and the related background area is reinitialized, so the second kind of ghost is also handled.
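The idea of never merging stationary objects into the background can be sketched with a running-average background model that updates only pixels currently classified as background. This is a generic selective-update scheme, not the authors' working/monitoring model, which the source does not detail. The class name `SelectiveBackground`, the learning rate, the threshold, and the `reinitialize` hook (standing in for the monitoring models' response to background contamination) are all assumptions.

```python
import numpy as np


class SelectiveBackground:
    """Running-average background model with selective update (hypothetical sketch)."""

    def __init__(self, first_frame: np.ndarray, alpha: float = 0.05, threshold: float = 25.0):
        # Processing may start from any frame: it simply seeds the background.
        self.bg = first_frame.astype(np.float64)
        self.alpha = alpha          # learning rate for background pixels (assumed)
        self.threshold = threshold  # absolute-difference threshold (assumed)

    def apply(self, frame: np.ndarray) -> np.ndarray:
        """Return the foreground mask, then update the background only where there is none."""
        f = frame.astype(np.float64)
        fg = np.abs(f - self.bg) > self.threshold
        bg_px = ~fg
        # Foreground pixels, including objects that have stopped, are never blended in,
        # so a stopped vehicle that later drives off leaves no ghost (first case).
        self.bg[bg_px] += self.alpha * (f[bg_px] - self.bg[bg_px])
        return fg

    def reinitialize(self, frame: np.ndarray, mask: np.ndarray) -> None:
        """Reset the background inside `mask`, e.g. where a monitoring step (not shown)
        has flagged contamination because a background object started to move (second case)."""
        self.bg[mask] = frame.astype(np.float64)[mask]
```

A plain running average that updated every pixel would slowly absorb a car waiting at a red light and then report a ghost when it drives away; skipping foreground pixels avoids that, at the cost of needing a separate mechanism (here the `reinitialize` hook) for objects that were part of the initial background.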

To derive properties from the detected objects, the objects need to be tracked. We record the sequence of regions each object passes through as its trajectory information.
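A minimal sketch of keeping "the regions an object passes" as its trajectory. It assumes a label image in which each pixel holds the ID of its road region, and it associates detections across frames by greedy nearest-centroid matching, a simple stand-in because the source does not name its tracker. `RegionTracker`, `max_dist`, and the label map are hypothetical.

```python
import math
import numpy as np


class RegionTracker:
    """Greedy nearest-centroid tracker that stores each track's sequence of region IDs."""

    def __init__(self, region_map: np.ndarray, max_dist: float = 30.0):
        self.region_map = region_map  # H x W array of region IDs (hypothetical)
        self.max_dist = max_dist      # largest centroid jump between frames (assumed)
        self.positions: dict[int, tuple[float, float]] = {}
        self.trajectories: dict[int, list[int]] = {}
        self._next_id = 0

    def _region_at(self, x: float, y: float) -> int:
        return int(self.region_map[int(y), int(x)])

    def update(self, centroids: list[tuple[float, float]]) -> None:
        """Match this frame's centroids to existing tracks and extend their trajectories."""
        unmatched = dict(self.positions)
        for x, y in centroids:
            best_id, best_d = None, self.max_dist
            for tid, (px, py) in unmatched.items():
                d = math.hypot(x - px, y - py)
                if d <= best_d:
                    best_id, best_d = tid, d
            if best_id is None:  # no track nearby: start a new one
                best_id = self._next_id
                self._next_id += 1
                self.trajectories[best_id] = []
            else:
                del unmatched[best_id]  # each track matches at most one detection
            self.positions[best_id] = (x, y)
            region = self._region_at(x, y)
            traj = self.trajectories[best_id]
            if not traj or traj[-1] != region:  # record a region only when it changes
                traj.append(region)
```

Storing only region changes keeps each trajectory short (for example `[lane, center, exit]`), which is exactly the form the rule comparison step needs. Tracks that disappear are never deleted in this sketch.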

With the tracking results, the trajectory information can be compared with the traffic light information. For example, when the light is red, no object is allowed to move from the straight-through regions into the central region, and left turns are also prohibited. The traffic light information can be input from an external control device, or obtained by recognizing the traffic light in the camera image if the camera can see it clearly.
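Rule comparison against the light state can be sketched as a lookup of forbidden region transitions per light color. The region names (`"straight_in"`, `"left_in"`, `"center"`), the table contents, and `find_violations` are hypothetical; they encode only the red-light example given above.

```python
# Hypothetical region names; in practice these would be the IDs assigned during region definition.
FORBIDDEN = {
    "red": {
        ("straight_in", "center"),  # entering the central area from a straight-through lane
        ("left_in", "center"),      # turning left
    },
    "green": set(),
}


def find_violations(trajectory: list[str], light: str) -> list[tuple[str, str]]:
    """Return every consecutive region transition in the trajectory that the light forbids."""
    forbidden = FORBIDDEN.get(light, set())  # unknown light states forbid nothing here
    return [(a, b) for a, b in zip(trajectory, trajectory[1:]) if (a, b) in forbidden]
```

For simplicity the sketch applies one light state to the whole trajectory; a fuller version would look up the light state at the frame in which each transition occurred.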

【Result】

(1) Anomaly detection precision: >95%

(2) Real-time processing in software: 30 fps at 1920×1080 (Intel Xeon 3.2 GHz, 4 GB memory).

(3) The vehicle and non-vehicle object detection results are shown in Figure 4, and an anomaly detection example is shown in Figure 5.

Figure 4 Vehicle and non-vehicle object detection result

Figure 5 Anomaly detection example
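For scale, the real-time figure in result (2) implies the following per-frame time budget and pixel throughput, derived only from the stated resolution and frame rate:

```latex
t_{\text{frame}} = \frac{1}{30\ \text{fps}} \approx 33.3\ \text{ms}, \qquad
1920 \times 1080 \times 30 = 62{,}208{,}000\ \text{pixels/s}
```

All stages (background subtraction, tracking, and rule comparison) must therefore fit within roughly 33 ms per full-HD frame.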

【Future Plans】

In 2015, we will conduct field tests of this technology with surveillance cameras installed at high positions, and will try to extend it to more scenes while keeping a high detection ratio. Meanwhile, we will promote this technology both in China and worldwide. For implementation, the technology can run as software on servers in the surveillance system, processing one or more video streams. It can also be embedded in the image processors on the camera side.

【Note 1】

Fujitsu Research & Development Center Co., Ltd.: Chairman Shigeru Sasaki. Location: Beijing, China

Phone: +86-21-6335-0606

E-mail: zhmtan@cn.fujitsu.com